The evolution of IT

The evolution of software development over time and its role today

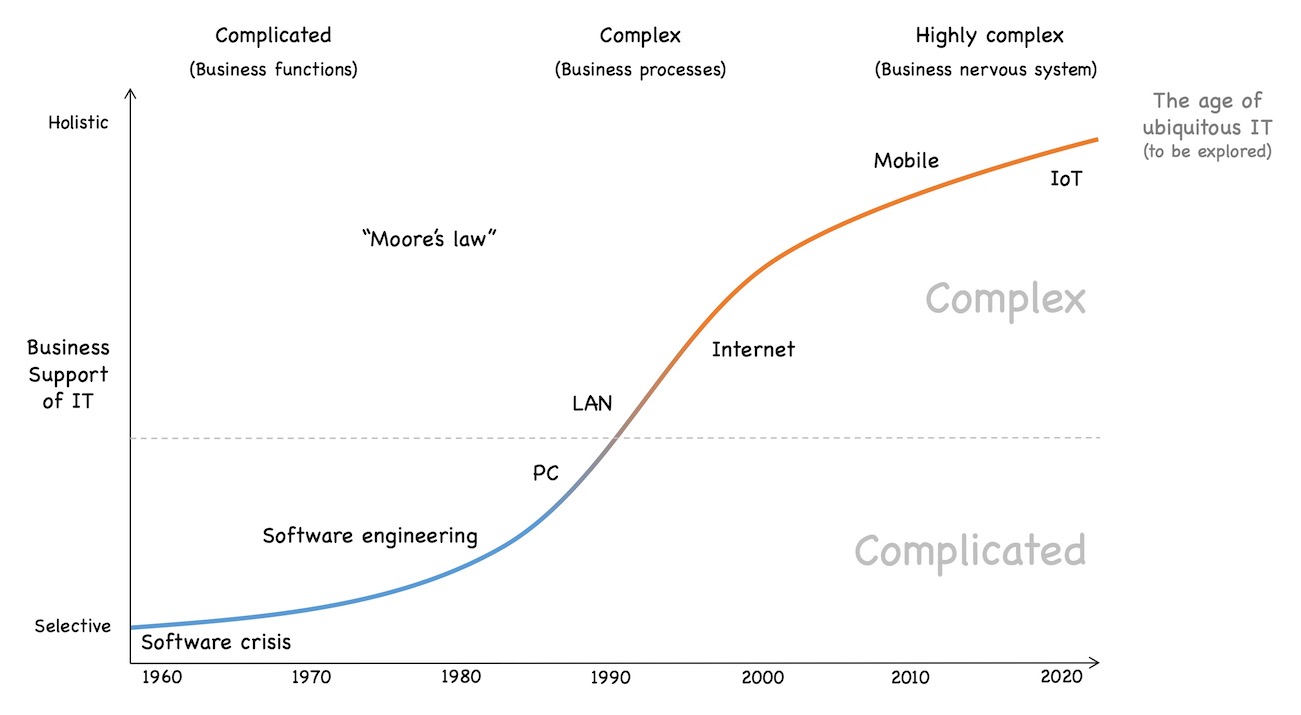

In a previous post, I briefly described the evolution of markets because it strongly influences today's IT, including the confusion we often experience. In this post, I discuss the evolution of IT itself, as this is also relevant for understanding the current state and role of IT.

Once upon a time

In the beginning (long before we started calling IT “IT”), there were tube-based computers (omitting the short phase of mechanical computers). They were relatively unreliable and maintenance-intensive. Thus, they were not interesting for commercial use.

This changed with transistors, which became widespread in the 1950s. Now, computers also became interesting for commercial purposes. Still, the focus of IT was on hardware. Hardware was the enabler, software just a byproduct.

This changed with the invention of third-generation programming languages (3GLs), which made software development a lot easier and a lot more productive. This, plus continuously rising compute power, gave software a huge boost. Around 1960, the perception of hardware and software started to reverse. Software became the focus of IT and hardware the byproduct.

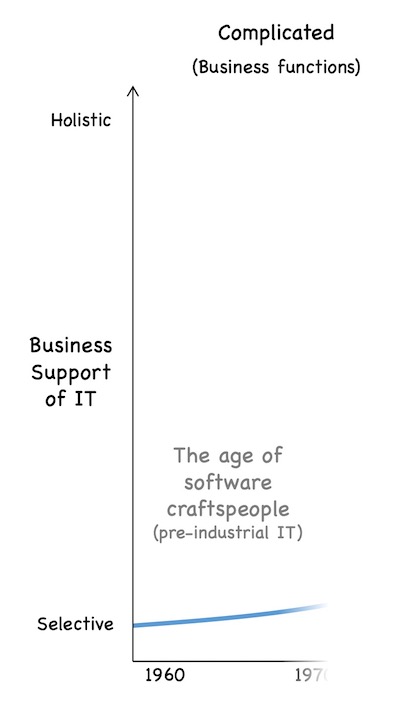

Pre-industrial software development

Compared to today, computers back then had very little compute power. Every mobile phone today (even the older ones) has several thousand times the compute power of the computers back then. Thus, it was only possible to calculate a little report here and maybe a small analysis there – very small and limited functionalities from a business point of view.

Still, business people loved it. Remember that pocket calculators only became popular in the mid-1970s. Before that, creating a report or the like meant paper, pen and slide rule, i.e., tedious and error-prone work. The possibility to hand over this unloved work to computers was highly welcome.

Therefore, people wanted more software: more reports, more analyses, more tedious manual work handed over to computers. But software development was in a pre-industrial state at the time (see this post for a brief explanation of pre-industrial, industrial and post-industrial), i.e., software development was mostly done by craftspeople 1 who holistically created the desired functionality.

If you were able to get hold of such a software craftsperson and explain to them what you needed as a business person, everything was fine. Several days later, you usually got exactly the software you needed.

But most of the time you were not able to get hold of anyone who could provide you with the software you wanted. Demand was very high, and supply, i.e., software craftspeople, was scarce.

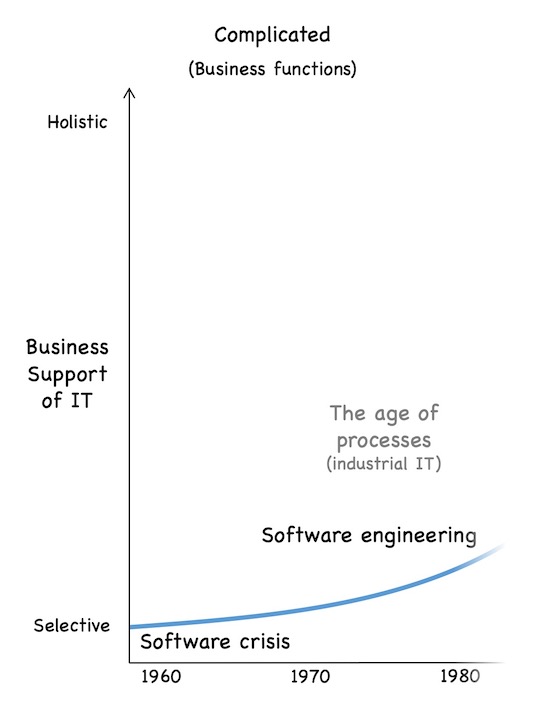

Software crisis and the rise of software engineering

This imbalance between demand and supply led to the beginning of the software crisis in the mid-1960s. Of course, the IT people tried to resolve the crisis. “How can we scale software production in a cost-efficient way?” they asked themselves. “Hey, the same problem was successfully solved in industrial production a few decades ago,” they realized. “Thus, let us try to apply the same principles to IT.”

Let us standardize the “product” software and its production process. Let us split up the production process into consecutive activities like business analysis, requirements engineering, architecture, design, implementation, test, assembly and deployment, and connect them via a virtual assembly line called “software development process”.

Let us also standardize and split up the activities needed to operate the software in production and create different roles for the different activities. Now we do not need craftspeople anymore who understand the whole process required to implement and operate software. Instead, experts for the different predefined activities are sufficient.

As communication takes place via predefined artifacts that are handed over from one “work station” to the next within the virtual assembly line (the software development process), those experts also do not need to understand what the other experts do. This is how software engineering arose. 2

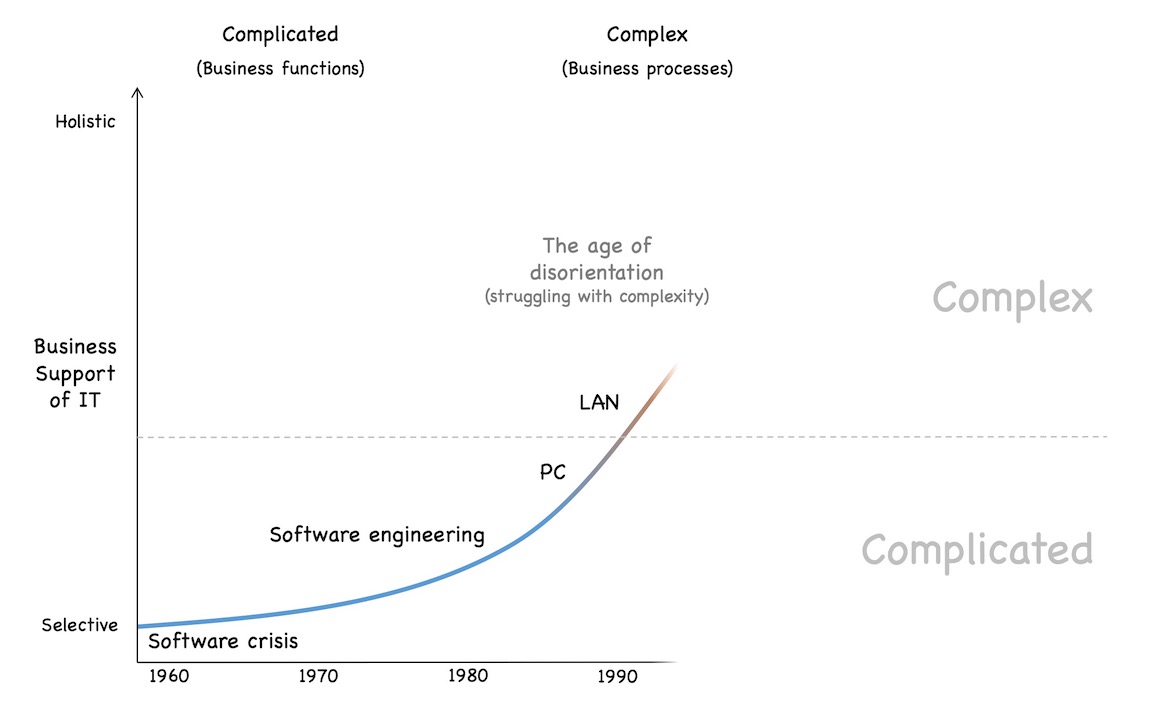

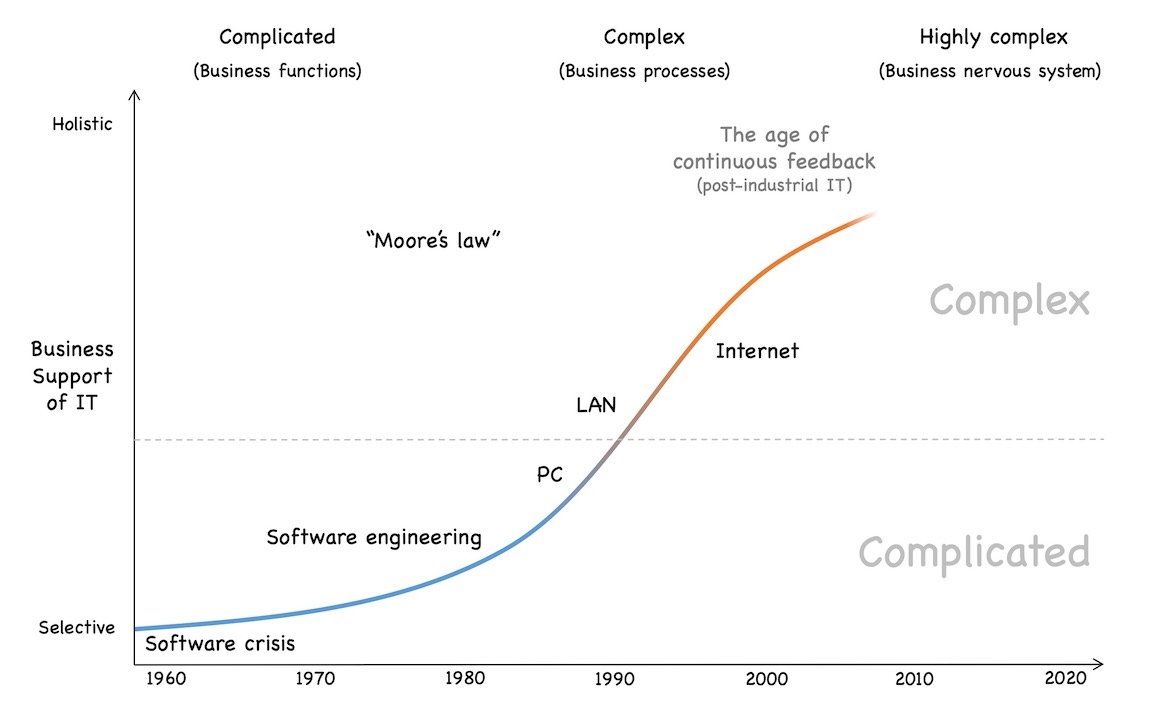

Crossing the line to complexity

This approach worked quite okay for a while. Sure, it had some issues but it was possible to scale software production by several orders of magnitude in a comparably cost-efficient way. The specialization on the operations side also made it possible to run the software in production in a quite cost-efficient way. 3

The hardware became more powerful. Mid-range computers were developed, then mini-computers, workstations and eventually personal computers. Additionally, networking spread and more and more computers became interconnected. As a result, it became possible to support not only limited business functions but complete business processes (or at least parts of them).

This development changed the predominant characteristics of software development projects significantly. Business functions tend to be relatively stable over time and relatively detached from employee and customer interaction. Therefore, the task of implementing such a business function (like the aforementioned report) typically is complicated. 4

Business processes, on the other hand, are a lot closer to company and market interaction. Markets as well as companies are complex by nature. As a result, the task of implementing support for business processes is typically complex, too.

This gradual shift from complicated to complex had quite dramatic consequences regarding software development and all its functions. Responding to complexity requires very different means than traditional software engineering practices offered. This led to a lot of disorientation and distortions regarding software development in the 1980s and 1990s.

Most companies tried to answer the problems they faced with more of the “proven” software engineering practices, which did not help and eventually led to the rise of agile software development and its successors. I will come back to this gradual shift and its consequences in a later post. For now, let us just take note of this shift and move on with the evolution of IT.

Towards a business nervous system

Moore’s law did its job and compute power kept rising every year. The Internet started its triumphant progress. Eventually, IT no longer just supported business processes but became the nervous system of the business: every product, every feature, every process, the whole company is encoded into software. The whole business DNA of every non-trivial business is encoded in its IT. Due to the effects of the digital transformation, all interactions with the market, partners and suppliers are encoded in IT as well.

Most companies have (reluctantly) accepted that, as a result, seamless IT operations are vital. These days, downtimes of just a few hours can quickly become life-threatening for a company.

But most companies have not yet realized that this evolution has another essential consequence: it is not possible anymore to launch a new product, tweak a process or customer interaction, add a new business feature or anything else without touching IT. As a consequence, you cannot move faster with your business than your IT can move.

In other words: IT has become a limiting factor regarding the speed at which business can move. This is especially important if your company lives in a post-industrial market (again, see this post for a brief explanation of post-industrial).

From now on

The evolution goes on. Mobile has changed customer interaction dramatically, even if most companies, at least in Germany, where I live, have not yet realized its disruptive effects (I will come back to this topic in a later post). Cloud changes the way we consume IT resources. The digital transformation changes our customer interaction patterns, and we can only speculate about the effects IoT will have (e.g., ubiquitous computing, contextual computing, and a lot more).

In the end, IT will most likely become an even more essential aspect of business. Business and IT will become intertwined more and more.

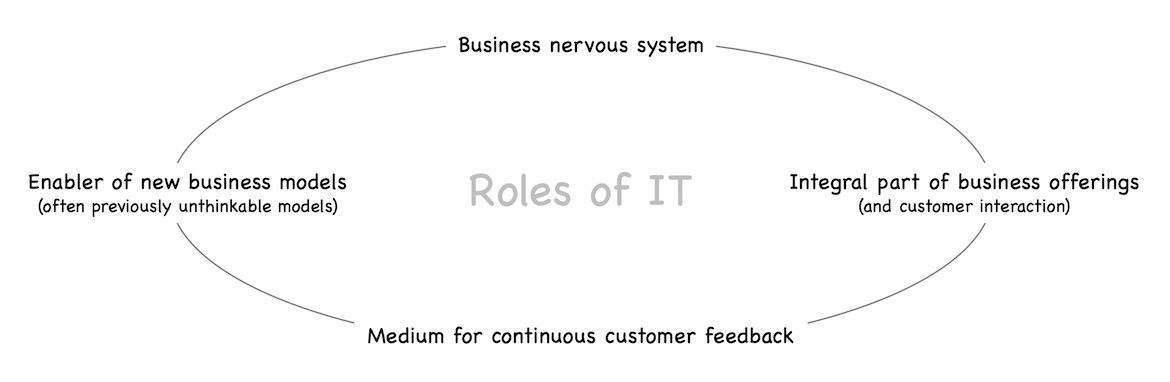

The role of IT today

In quite a few companies, IT is still considered a “cost center”, a function that is needed but does not create business value, like, e.g., accounting 5. If we look at the evolution of IT and its current state (I will discuss this in more detail in a later post), we can observe at least four roles that IT has today:

- IT is the business nervous system of every non-trivial company. We have discussed that in the previous sections of this post.

- Due to its continuous technological progress, IT acts as an enabler of new, previously often unthinkable business models – some of them being disruptive.

- As a result of the advancing digital transformation, IT becomes an integral part of business offerings, often also defining the customer interaction.

- IT is the primary medium for the continuous customer feedback loop that you need to be successful in a post-industrial market.

There are probably more roles that today’s IT holds, but just looking at the four roles mentioned here, it should be clear that IT is no longer a “cost center”. Maybe IT was a cost center 30-40 years ago, but today IT plays very different roles. IT is not only very different from the IT of 30-40 years ago, it also supports a very different business than it did at the time – which is often overlooked.

Therefore, we need to re-think IT as a whole. The traditional ideas of software engineering are not bad, but they solve a completely different problem than the one we face today. I will write a lot about this re-thinking of IT in this blog. But for today, I would like to leave it here.

1. In contrast to craftsmanship in the 19th century and before, a lot of women were software developers in the pre-industrial times of software development. The decline in the number of women in software development that we still suffer from in many countries started a lot later.

2. Of course, this is a drastically simplified a posteriori description of the development of software engineering. Very likely nobody back then thought about these ideas in the way I described them here. Instead, these ideas and concepts developed over a longer period of time. Nevertheless, in hindsight this short reasoning sums up quite exactly what happened.

3. On a side note, the software crisis never ended. The demand for more software was always bigger than the supply, even with the huge boost in productivity due to software engineering. After approx. 20 years of continuous software crisis, the crisis was simply declared to have ended, probably because it does not look good to be in a continuous state of crisis without being able to solve it.

4. I will probably write a post later that explains the characteristics of complicated systems vs. complex systems and then add the link here. Until then, the Wikipedia page for Cynefin or the Cynefin introduction by Dave Snowden, one of the inventors of Cynefin, are good starting points to understand the difference between complicated and complex.

5. Sometimes I ask myself who creates less business value: the people in accounting or the people who usually claim that the people in accounting do not create business value. But that’s a different discussion …
